
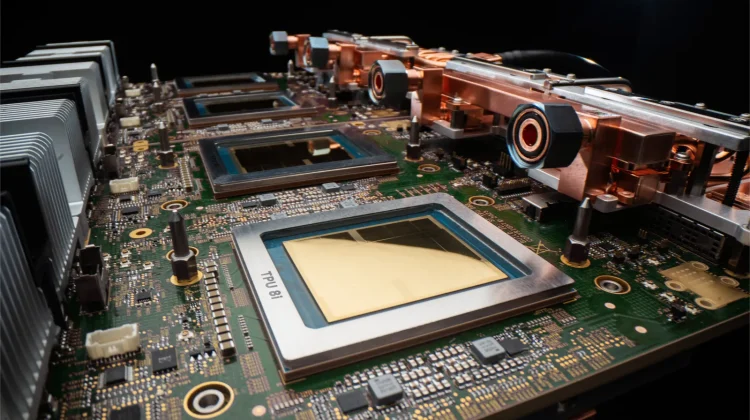

For years, Google’s Tensor Processing Units (TPUs) have been the silent workhorses behind the scenes, powering everything from basic search to the massive Gemini models we use today.

But as we move from chatbots to agentic AI that can autonomously plan and execute multi-step tasks, the hardware requirements are changing. To meet this moment, Google has introduced a dual-chip approach with its eighth-generation hardware: the TPU 8t and the TPU 8i.

TPU 8t: the training powerhouse

The “t” in 8t stands for training. This chip is built for the brute-force intensity of developing frontier AI models. Google’s goal with the 8t is to reduce model development cycles from months to weeks.

- Massive Scale: A single TPU 8t superpod can now scale to 9,600 chips, offering nearly 3x the compute performance per pod compared to the previous generation.

- Linear Scaling: Thanks to the new Virgo Network fabric, these chips can scale up to a million units in a single logical cluster with near-linear performance gains.

TPU 8i: the inference reasoning engine

The “i” stands for inference—the act of actually running the AI once it’s trained. This is the chip that will power the “swarms” of agents Google envisions for the future.

When an AI agent has to think through a complex problem, latency is the enemy. The TPU 8i is designed to cut that wait by pairing 288GB of high-bandwidth memory with 3x more on-chip SRAM than the previous generation. This allows the model’s active working set to stay entirely on-chip, delivering 80% better performance-per-dollar for businesses serving AI at scale.
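To put that 80% figure in concrete terms, here is a quick back-of-the-envelope sketch in Python. It assumes "80% better performance-per-dollar" means 1.8x the work per dollar spent. The function name and the $1,000 baseline bill are hypothetical, chosen only for illustration, and are not figures from Google.

```python
def cost_for_same_work(baseline_cost: float, perf_per_dollar_gain: float) -> float:
    """Cost of serving the same workload after a performance-per-dollar gain.

    perf_per_dollar_gain is fractional: 0.80 means 80% more work per dollar.
    """
    return baseline_cost / (1.0 + perf_per_dollar_gain)


# Hypothetical baseline: a workload that costs $1,000 on the previous generation.
baseline = 1000.0
new_cost = cost_for_same_work(baseline, 0.80)
savings_pct = (1 - new_cost / baseline) * 100

print(f"New cost: ${new_cost:.2f} ({savings_pct:.1f}% lower)")
# prints "New cost: $555.56 (44.4% lower)"
```

Under that reading, an 80% gain in performance-per-dollar works out to a bill roughly 44% smaller for the same workload, not 80% smaller.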

The ‘Axion’ connection

For the first time, both of these chips run on Google’s own Arm-based Axion CPU hosts. By owning the full stack, from the CPU host to the accelerator to the liquid-cooled data center, Google can optimize energy efficiency in a way that off-the-shelf components simply can’t match.

These eighth-generation TPUs deliver up to 2x better performance-per-watt than the previous “Ironwood” generation. In an era where data center power is a primary constraint, this efficiency is just as important as raw speed.

When we’ll see the results

Both the TPU 8t and 8i will be generally available later this year as part of Google’s AI Hypercomputer stack. For the average user, this means the Gemini-powered features in Workspace and on your devices are about to get a lot faster, more responsive, and significantly more capable of handling complex tasks on your behalf.
