You already know that Google uses machine learning to filter spam out of Gmail, to keep Google Maps as accurate as it is, and more, but did you know that machine learning is now also being used to detect whether a website’s notification prompts are being abused? We covered this just a few days ago, but Google has now revealed that it’s performing these ML predictions entirely on your device!

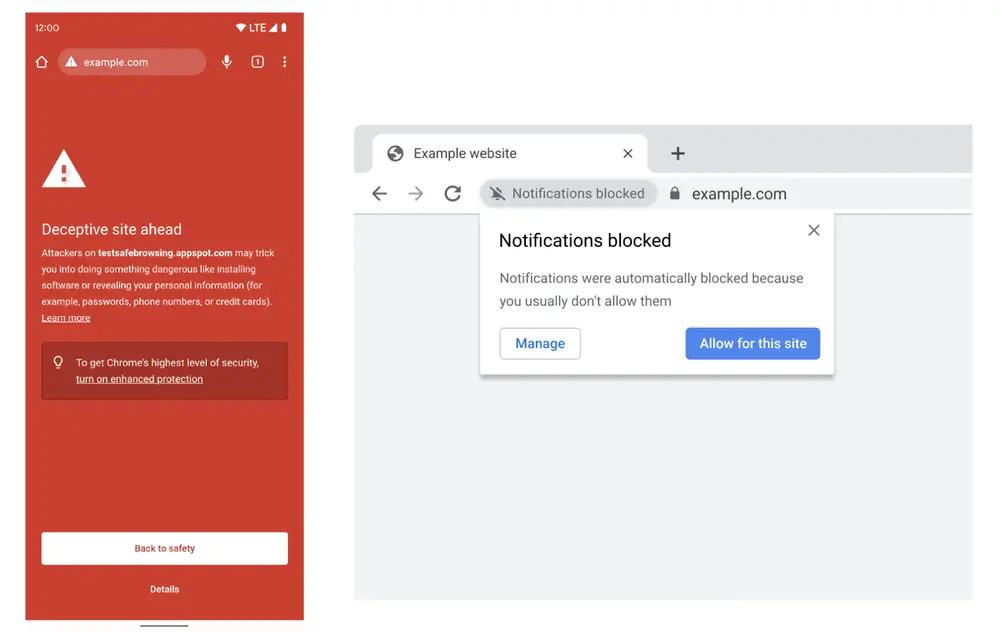

This means that your data stays private and never leaves your Chromebook, phone or tablet, making Safe Browsing, well, safer than ever. With the next release of Chrome, the following is what you’ll see if a phishing attempt is detected. According to Google, this new ML model identifies 2.5 times more potentially malicious sites and phishing attacks than the previous model.

> To help people browse the web with minimal interruption, Chrome predicts when permission prompts are unlikely to be granted based on how the user previously interacted with similar permission prompts, and silences these undesired prompts.
>
> The Keyword

Additionally, if a user has a history of denying permission requests from a specific website, Chrome will learn this and automatically block them. Luckily, you’ll still be able to manually grant permission by clicking the “quiet” prompt shown in the Omnibox – you know, just in case the machine learning didn’t get things right.
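To make that idea a little more concrete, here is a toy sketch of the "learn from your past denials" behavior. It is not Chrome's actual model, which Google describes as machine learning running on your device. This is a plain counting heuristic, and the class name, method names, and both thresholds are hypothetical values chosen purely for illustration.

```python
from collections import defaultdict

# Hypothetical thresholds for this sketch; Chrome does not publish such values.
DENY_RATE_THRESHOLD = 0.8   # share of denials needed before quieting a site
MIN_DECISIONS = 3           # don't judge a site on too little history


class PromptHistory:
    """Toy stand-in for an on-device record of permission decisions."""

    def __init__(self):
        # site -> [granted_count, denied_count]
        self._counts = defaultdict(lambda: [0, 0])

    def record(self, site: str, granted: bool) -> None:
        """Store one user decision for a site's permission prompt."""
        self._counts[site][0 if granted else 1] += 1

    def should_quiet(self, site: str) -> bool:
        """Return True if the next prompt from this site should be quieted."""
        granted, denied = self._counts.get(site, (0, 0))
        total = granted + denied
        if total < MIN_DECISIONS:
            return False  # not enough history, so show the normal prompt
        return denied / total >= DENY_RATE_THRESHOLD


history = PromptHistory()
for _ in range(3):
    history.record("news.example", granted=False)

print(history.should_quiet("news.example"))  # True
print(history.should_quiet("maps.example"))  # False
```

Even in this simplified version, everything lives in a local object and nothing is sent anywhere, which is the privacy point of doing the prediction on the device. A quieted prompt is also not a permanent block: the user can still grant permission by hand.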

My question to you is this – how much control are you okay with “the algorithm” having over your browsing? Sure, it’s helpful in instances like this, but at some point, it’s probably not going to understand the nuances of the human experience like a human would. My hope is that features like this remain optional and can be toggled off by those who aren’t interested, but anything related to privacy and security usually ends up being mandatory and on by default, since Google’s decisions often indicate that it doesn’t think it can rely on people to protect themselves. I mean, it’s often not wrong (how many people still hadn’t turned on 2FA for their Google Accounts before the company had to force it on, right?) but at what point does this cross into making decisions for the user?
